Beyond Sectors: Mapping the Market with Algorithmic Clustering Strategies

- The Failure of Sector-Based Allocation

- The Role of Unsupervised Learning

- K-Means: Centroid-Based Asset Grouping

- Hierarchical Architectures and Dendrograms

- DBSCAN: Identifying Market Anomalies

- Gaussian Mixtures and Regime Detection

- Overcoming the Curse of Dimensionality

- Clustering for Diversification and Risk

- The Technical Quant Stack

Traditional asset management relies on static classifications like the Global Industry Classification Standard (GICS). Portfolio managers typically group companies by their primary business activity: Tech, Energy, or Finance. However, in the high-velocity world of modern quantitative finance, these labels often fail to capture the true statistical behavior of an asset. During a market crisis, a technology stock might trade more like a gold miner or a defensive utility than its Silicon Valley peers.

Algorithmic clustering strategies offer a solution by utilizing unsupervised machine learning to group assets based on their return distributions, volatility profiles, and multi-factor sensitivities. Instead of asking what a company sells, these algorithms ask how the asset actually moves. This data-driven approach allows quants to identify hidden correlations, build superior market-neutral portfolios, and detect shifts in market regimes before they become obvious in the price action.
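To make "how the asset actually moves" concrete, here is a minimal sketch of turning raw returns into a per-asset feature table that a clustering algorithm could consume. The tickers `AAA` and `BBB`, the synthetic market series, and the choice of four features are all assumptions for illustration, not a recommended feature set.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(5)
dates = pd.bdate_range("2023-01-02", periods=250)

# Synthetic daily market returns and two hypothetical assets with different betas.
market = pd.Series(rng.normal(0.0004, 0.01, size=250), index=dates, name="MKT")
returns = pd.DataFrame(
    {
        "AAA": 1.5 * market + rng.normal(0, 0.005, size=250),
        "BBB": 0.5 * market + rng.normal(0, 0.005, size=250),
    }
)

# One row per asset: volatility profile, distribution shape, factor sensitivity.
features = pd.DataFrame(
    {
        "ann_vol": returns.std() * np.sqrt(252),
        "skew": returns.skew(),
        "excess_kurtosis": returns.kurt(),
        "market_beta": returns.apply(lambda r: r.cov(market) / market.var()),
    }
)
print(features.round(3))
```

Each row of `features` is one point in feature space; the algorithms discussed in the following sections group those points.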

The Quantitative Evolution

While supervised learning predicts a specific target (like a price move), clustering discovers the underlying structure of the data itself. For a US-based institutional fund, this means the ability to identify clusters of assets that respond similarly to specific macroeconomic shocks, such as an unexpected interest rate hike or a sudden widening of credit spreads.

The Role of Unsupervised Learning in Finance

Unsupervised learning serves as the "exploratory" arm of an algorithmic trading suite. Its primary goal is to find patterns in unlabeled data. In finance, this translates to identifying "neighborhoods" of securities that share a common latent factor.

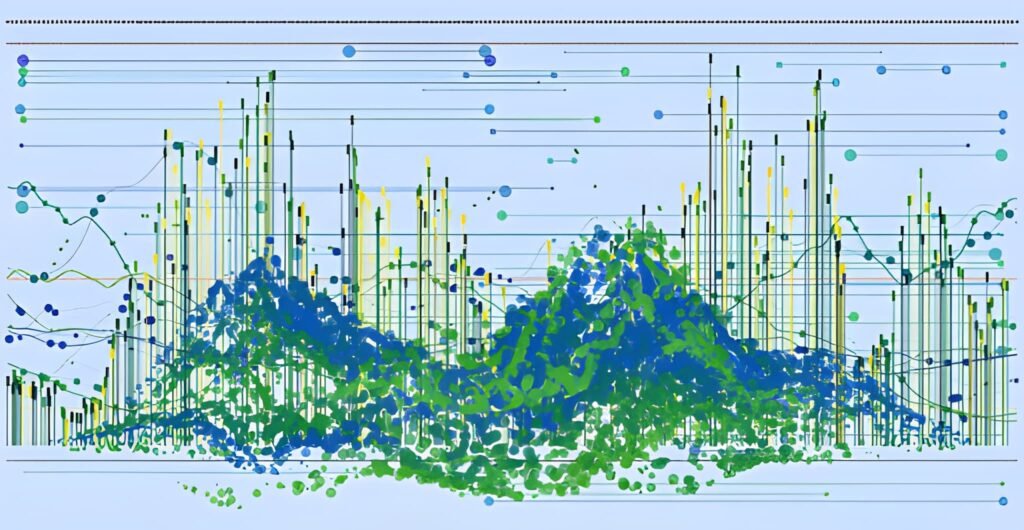

The power of clustering lies in its ability to capture structure that simple pairwise correlations miss. Markets rarely follow simple correlations; they exhibit complex, multi-dimensional structures. Clustering algorithms process hundreds of features, ranging from traditional P/E ratios to alternative data like satellite imagery scores, to find the common drivers that tie disparate assets together. The resulting map of the market often reflects realized behavior more closely than a human-curated classification.

K-Means: Centroid-Based Asset Grouping

K-Means is the foundational algorithm for many quantitative desks. It partitions a dataset into "K" distinct clusters by minimizing the variance within each group. In trading, quants use K-Means to group stocks by their volatility-adjusted returns.

The Objective Function of K-Means

The algorithm seeks to minimize the "Inertia" or the Sum of Squared Distances (SSD) between each asset and its assigned cluster centroid.

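$$\text{Inertia} = \sum_{k=1}^{K} \sum_{x_i \in C_k} \lVert x_i - \mu_k \rVert^2$$

Here $x_i$ is the position of asset $i$ in feature space, $C_k$ is the set of assets assigned to cluster $k$, and $\mu_k$ is the centroid of that cluster.

Example: A quant scans 500 stocks. The algorithm identifies that 45 of them consistently exhibit high kurtosis and strongly positive return autocorrelation. It assigns these to a "Momentum Cluster" and calculates the mean position (centroid) of this group to measure how new assets drift in or out of this category.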
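The objective can be checked numerically. The sketch below, which assumes scikit-learn is available and uses synthetic two-feature data for 60 hypothetical assets, fits K-Means and then recomputes the inertia by hand as the sum of squared distances to each asset's assigned centroid.

```python
import numpy as np
from sklearn.cluster import KMeans

rng = np.random.default_rng(0)

# Synthetic features for 60 hypothetical assets:
# columns are (annualized volatility, mean daily return in percent).
low_vol = rng.normal(loc=[0.10, 0.02], scale=0.01, size=(30, 2))
high_vol = rng.normal(loc=[0.40, 0.08], scale=0.01, size=(30, 2))
features = np.vstack([low_vol, high_vol])

model = KMeans(n_clusters=2, n_init=10, random_state=0).fit(features)

# Recompute the objective manually: squared distance to the assigned centroid.
assigned_centroids = model.cluster_centers_[model.labels_]
manual_inertia = float(((features - assigned_centroids) ** 2).sum())

print("cluster sizes:", np.bincount(model.labels_))
print("inertia (sklearn):", model.inertia_)
print("inertia (manual): ", manual_inertia)
```

In practice the features would usually be standardized first, since K-Means distances are dominated by whichever feature has the largest scale.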

Hierarchical Architectures and Dendrograms

Unlike K-Means, which requires the user to specify the number of clusters (K) beforehand, Hierarchical Clustering builds a nested structure of relationships. This is often visualized as a "Dendrogram"—a tree-like diagram that shows exactly at what level of similarity two assets merge.

Most quants prefer the Agglomerative approach, which starts with each stock as its own cluster and merges them based on proximity. This is highly effective for building "Portfolio Trees." A Divisive approach, by contrast, starts with the whole market and splits it into smaller subsets. Agglomerative clustering allows for a granular view of asset "lineage," showing which stocks merge first and therefore move most closely together. The tree describes similarity, not causation, so it cannot by itself say which stock drives a market move.
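A minimal sketch of the agglomerative approach with SciPy follows. It assumes synthetic returns for six hypothetical assets driven by two latent factors, and it uses the common correlation distance sqrt(0.5 * (1 - rho)) with average linkage; both are illustrative choices rather than the only reasonable ones.

```python
import numpy as np
from scipy.cluster.hierarchy import linkage, fcluster
from scipy.spatial.distance import squareform

rng = np.random.default_rng(1)
n_days = 500

# Two hypothetical latent factors, each driving a group of three assets.
factor_a = rng.normal(size=n_days)
factor_b = rng.normal(size=n_days)
returns = np.column_stack(
    [factor_a + 0.3 * rng.normal(size=n_days) for _ in range(3)]
    + [factor_b + 0.3 * rng.normal(size=n_days) for _ in range(3)]
)

# Convert correlations into distances: highly correlated assets are close.
corr = np.corrcoef(returns, rowvar=False)
dist = np.sqrt(np.clip(0.5 * (1.0 - corr), 0.0, None))
np.fill_diagonal(dist, 0.0)

# linkage expects a condensed (upper-triangle) distance vector.
tree = linkage(squareform(dist, checks=False), method="average")

# Cut the tree into two flat clusters.
labels = fcluster(tree, t=2, criterion="maxclust")
print(labels)
```

The `tree` array can be passed to `scipy.cluster.hierarchy.dendrogram` to draw the diagram described above.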

DBSCAN: Identifying Market Anomalies

Density-Based Spatial Clustering of Applications with Noise (DBSCAN) is well suited to non-spherical clusters. Financial data, such as features derived from the order book, often clusters in irregular, elongated shapes that K-Means would fail to capture.

More importantly, DBSCAN identifies Outliers as noise. In an algorithmic context, these outliers represent assets that are "disconnected" from the broader market. These could be companies facing unique idiosyncratic risks, or they could represent the start of a massive mispricing opportunity (Alpha). By focusing on the "density" of asset returns, DBSCAN can ignore the random noise of the market and focus only on significant statistical groupings.
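The noise labeling can be seen in a small sketch, assuming scikit-learn and synthetic two-dimensional data. The `eps` and `min_samples` values are arbitrary choices for this toy data; on real return features they would need tuning.

```python
import numpy as np
from sklearn.cluster import DBSCAN

rng = np.random.default_rng(2)

# Two dense groups of hypothetical assets plus two isolated points.
dense_a = rng.normal(loc=[0.0, 0.0], scale=0.05, size=(40, 2))
dense_b = rng.normal(loc=[1.0, 1.0], scale=0.05, size=(40, 2))
outliers = np.array([[3.0, -3.0], [-3.0, 3.0]])
points = np.vstack([dense_a, dense_b, outliers])

labels = DBSCAN(eps=0.3, min_samples=5).fit_predict(points)

# scikit-learn marks noise points with the label -1.
noise_idx = np.flatnonzero(labels == -1)
n_clusters = len(set(labels.tolist()) - {-1})

print("clusters found:", n_clusters)
print("indices flagged as noise:", noise_idx)
```

The indices in `noise_idx` are the "disconnected" assets that would be candidates for closer inspection.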

| Algorithm | Best Use Case | Computational Complexity | Handling of Outliers |
|---|---|---|---|
| K-Means | Fast grouping of large datasets. | Low (roughly linear in the number of assets per iteration) | Poor (outliers pull centroids). |
| Hierarchical | Understanding deep asset relationships. | High (at least quadratic in the number of assets) | Good (visible in dendrogram). |
| DBSCAN | Regime detection and anomaly hunting. | Medium | Excellent (explicitly labels noise). |
| Gaussian Mixture | Probabilistic risk management. | Medium to High | Moderate (probabilistic). |

Gaussian Mixtures and Regime Detection

A major challenge in US capital markets is Regime Change. A strategy that works in a "Low Volatility" regime can fail badly in a "High Volatility" regime. Gaussian Mixture Models (GMM) address this by modeling the data as a mixture of several normal distributions, one per cluster.

GMM provides a probability of an asset belonging to a cluster. An algorithm might determine there is a 70% probability we are in a "Bull Trend" and a 30% probability we are entering a "Crash Regime." This allows for a "Soft Clustering" approach where the quant can gradually reduce leverage as the probability of the crash regime increases, rather than waiting for a binary "buy/sell" signal.
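The soft-clustering idea can be sketched as follows, assuming scikit-learn and a synthetic return series that mixes a calm and a stressed regime. The rule `leverage = 1 - stress_prob` is a hypothetical de-leveraging rule used only to show how the probabilities might feed a position size.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

rng = np.random.default_rng(3)

# Synthetic daily returns: a calm regime and a high-volatility regime.
calm = rng.normal(loc=0.0005, scale=0.005, size=600)
stressed = rng.normal(loc=-0.002, scale=0.03, size=200)
daily_returns = np.concatenate([calm, stressed]).reshape(-1, 1)

gmm = GaussianMixture(n_components=2, n_init=5, random_state=0).fit(daily_returns)

# Treat the component with the larger variance as the stressed regime.
variances = gmm.covariances_.reshape(-1)
stress_component = int(np.argmax(variances))

# Regime probabilities for a quiet day (+0.1%) and a sharp sell-off (-6%).
probs = gmm.predict_proba(np.array([[0.001], [-0.06]]))
stress_prob = probs[:, stress_component]

# Hypothetical rule: scale leverage down as the stress probability rises.
leverage = 1.0 - stress_prob
print("P(stressed):", stress_prob.round(3))
print("leverage:   ", leverage.round(3))
```

A production regime model would typically use more than one feature and account for time dependence, which a plain mixture model ignores.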

Overcoming the Curse of Dimensionality

In computational finance, adding more features makes it harder to cluster assets accurately. This is known as the Curse of Dimensionality. As the number of features (dimensions) increases, the distances between pairs of assets become nearly indistinguishable, so nearest and farthest neighbors look alike and the clusters lose their meaning.

To solve this, quants apply Dimensionality Reduction techniques such as Principal Component Analysis (PCA) before clustering. t-SNE is also used, though mainly for visualization, because it does not preserve global distances. By projecting 500 different financial ratios down onto 5 or 10 Principal Components, the algorithm can find clusters in a dense, information-rich space without the noise of irrelevant variables.
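A minimal sketch of the projection step, assuming scikit-learn and synthetic data in which 50 observed features are generated from 3 hidden drivers plus noise. The dimensions are scaled down from the 500-ratio example purely to keep the sketch small.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(4)
n_assets, n_features, n_latent = 200, 50, 3

# Hypothetical data: 50 observed ratios driven by 3 latent factors plus noise.
latent = rng.normal(size=(n_assets, n_latent))
loadings = rng.normal(size=(n_latent, n_features))
features = latent @ loadings + 0.1 * rng.normal(size=(n_assets, n_features))

# Standardize so no single ratio dominates, then project onto 5 components.
scaled = StandardScaler().fit_transform(features)
pca = PCA(n_components=5).fit(scaled)
reduced = pca.transform(scaled)

explained = pca.explained_variance_ratio_.cumsum()
print("reduced shape:", reduced.shape)
print("cumulative explained variance:", explained.round(3))
```

The `reduced` matrix, rather than the raw `features`, would then be handed to K-Means or another clustering algorithm.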

The Silhouette Score

How do we know if our clusters are actually good? Quants use the Silhouette Score to measure how similar an asset is to its own cluster compared to other clusters.

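$$s = \frac{b - a}{\max(a, b)}$$

Where $a$ is the average distance from the asset to the other members of its own cluster and $b$ is the average distance from the asset to the members of the nearest neighboring cluster. The score ranges from -1 to 1. A score near 1.0 indicates clear separation, a score near 0 indicates overlapping clusters, and a negative score suggests the asset may sit in the wrong cluster.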
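The score above can be worked through by hand on a toy example. The sketch below uses only NumPy; the function name `silhouette_values` and the four one-dimensional points are assumptions for illustration, and singleton clusters are given a score of 0 by convention.

```python
import numpy as np

def silhouette_values(points, labels):
    """Per-point silhouette scores using Euclidean distance."""
    points = np.asarray(points, dtype=float)
    labels = np.asarray(labels)
    diffs = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diffs ** 2).sum(axis=-1))
    scores = np.zeros(len(points))
    for i in range(len(points)):
        same = labels == labels[i]
        same[i] = False  # exclude the point itself from its own cluster
        if not same.any():
            continue  # singleton cluster keeps a score of 0
        a = dist[i, same].mean()
        b = min(
            dist[i, labels == other].mean()
            for other in np.unique(labels)
            if other != labels[i]
        )
        scores[i] = (b - a) / max(a, b)
    return scores

# Two tight, well-separated clusters on a line.
points = [[0.0], [1.0], [5.0], [6.0]]
labels = [0, 0, 1, 1]
scores = silhouette_values(points, labels)
print(scores.round(3))          # [0.818 0.778 0.778 0.818]
print(round(scores.mean(), 3))  # 0.798
```

For the first point, a = 1 and b = (5 + 6) / 2 = 5.5, so the score is 4.5 / 5.5, or about 0.818. scikit-learn offers `sklearn.metrics.silhouette_score` for the averaged version on larger datasets.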

Clustering for Diversification and Risk

The primary use of clustering for an investment expert is Risk Diversification. Traditional "60/40" portfolios often suffer from "hidden correlations." If you own ten tech stocks, you aren't diversified. Even if you own a tech stock and a banking stock, they might both be in the "High Interest Rate Sensitivity" cluster.

Clustering identifies these hidden bonds. A truly diversified portfolio selects one asset from each distinct cluster. This ensures that the portfolio has exposure to different market drivers. If the "Growth Cluster" suffers, the "Value Cluster" or the "Inflation-Protected Cluster" might provide the necessary hedge.

- Cluster-Based Risk Parity: Allocating capital so that each cluster contributes an equal amount of risk to the total portfolio volatility.

- Pairs Trading Enhancements: Only searching for statistical arbitrage opportunities (pairs) within the same dense cluster, which tends to raise the likelihood that a candidate pair is cointegrated.

- Tail Risk Hedging: Identifying the "Crash Cluster"—assets that historically spike in value when the broader market clusters collapse.
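As an illustration of the first item, here is a simplified sketch of cluster-based risk parity using only NumPy. It assumes inverse-variance weights inside each cluster and inverse-volatility budgets across clusters, which equalizes cluster risk contributions exactly only when clusters are uncorrelated with one another. The function name `cluster_risk_parity` and the 4-asset covariance matrix are hypothetical.

```python
import numpy as np

def cluster_risk_parity(cov, labels):
    """Naive two-level allocation: inverse-variance within clusters,
    inverse-volatility across clusters."""
    cov = np.asarray(cov, dtype=float)
    labels = np.asarray(labels)
    weights = np.zeros(len(labels))
    cluster_ids = np.unique(labels)
    cluster_vols = []
    for c in cluster_ids:
        idx = np.flatnonzero(labels == c)
        sub = cov[np.ix_(idx, idx)]
        inv_var = 1.0 / np.diag(sub)
        w = inv_var / inv_var.sum()
        weights[idx] = w
        cluster_vols.append(np.sqrt(w @ sub @ w))
    # Give each cluster a budget inversely proportional to its volatility.
    budgets = 1.0 / np.array(cluster_vols)
    budgets /= budgets.sum()
    for c, budget in zip(cluster_ids, budgets):
        weights[labels == c] *= budget
    return weights

# Hypothetical covariance: two clusters with no cross-cluster covariance.
cov = np.array([
    [0.04, 0.03, 0.0, 0.0],
    [0.03, 0.04, 0.0, 0.0],
    [0.0, 0.0, 0.01, 0.005],
    [0.0, 0.0, 0.005, 0.01],
])
labels = np.array([0, 0, 1, 1])

weights = cluster_risk_parity(cov, labels)
risk_contrib = weights * (cov @ weights)
cluster_risk = np.array(
    [risk_contrib[labels == c].sum() for c in np.unique(labels)]
)
print("weights:", weights.round(4))
print("risk contribution per cluster:", cluster_risk.round(6))
```

When clusters are correlated, the contributions will drift apart and an iterative equal-risk-contribution solver would be the more faithful choice.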

The Technical Quant Stack

Implementing these strategies requires a robust technical framework. Python is the most widely used language for machine learning in finance. Quants typically use the scikit-learn library for K-Means, DBSCAN, and Gaussian Mixture Models, and SciPy for hierarchical clustering and dendrograms.

For data handling, pandas manages the time-series return data, and NumPy handles the matrix algebra required for distance calculations. Larger funds often use GPU acceleration via libraries like PyTorch or RAPIDS to cluster thousands of assets with low latency, so that regime shifts can be flagged soon after they appear in the data rather than after they show up in the broad market indices.

In conclusion, algorithmic clustering strategies represent the move from "Intuition" to "Inference." By allowing the data to speak for itself, traders can bypass the biases of traditional industry labels and see the market as it truly exists—a shifting, breathing landscape of statistical relationships. As global markets grow more complex and interconnected, the ability to map these relationships through machine learning will remain a non-negotiable requirement for institutional success.

Expert Summary

The market is a non-stationary beast. Static sectors are for retail charts. Professional quants use Adaptive Clustering to redefine the market every day. By grouping assets through the lens of pure data, you don't just follow the market—you understand the DNA of its movement.