Architecting Algorithmic Trading Systems

Phase 1: Hypothesis and Alpha Discovery

The construction of a successful trading model does not begin with code; it begins with an observation. Professional practitioners look for market inefficiencies—recurring anomalies where price behavior deviates from random walk theory. This is known as alpha discovery. Whether it is a lead-lag relationship between correlated assets or a specific institutional flow pattern during market opens, your model must be rooted in a robust economic rationale.
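As a rough illustration of how a lead-lag hypothesis might be checked before any strategy code is written, the sketch below measures the correlation between one asset's returns and another asset's returns a few periods later. The function names and the toy return series are hypothetical, and a high lagged correlation on its own is not evidence of a tradable edge.

```python
import math


def pearson(x, y):
    """Plain Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def lagged_correlation(leader, follower, lag):
    """Correlation between leader[t] and follower[t + lag]."""
    if len(leader) != len(follower):
        raise ValueError("series must have the same length")
    if lag < 0 or lag >= len(leader) - 1:
        raise ValueError("lag out of range")
    return pearson(leader[: len(leader) - lag], follower[lag:])


# Toy returns (made up): the follower repeats the leader one period later.
leader = [0.01, -0.02, 0.015, 0.0, -0.01, 0.02, -0.005, 0.01]
follower = [0.0] + leader[:-1]

for lag in (0, 1, 2):
    print(lag, round(lagged_correlation(leader, follower, lag), 3))
```

In this contrived series the correlation peaks at a lag of one period, which is the kind of pattern that would then need an economic explanation before it is trusted.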

A common mistake for novices is attempting to find patterns through raw data mining without a prior hypothesis. This often leads to spurious correlations that vanish in live markets. Instead, a systematic trader identifies a fundamental reason why an anomaly should exist, such as liquidity constraints, behavioral biases among retail participants, or structural imbalances in futures curves.

Phase 2: High-Fidelity Data Pipelines

In algorithmic trading, your model is only as good as the data it consumes. Building a robust data pipeline is perhaps the most labor-intensive part of the process. You must decide on the granularity of data required: daily OHLCV (Open, High, Low, Close, Volume) might suffice for swing strategies, but tick-by-tick or Level 2 order book data is mandatory for high-frequency execution.
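To make the difference in granularity concrete, the following sketch aggregates a stream of ticks into fixed-width OHLCV bars. The tuple layout `(timestamp_seconds, price, size)` and the function name `ticks_to_ohlcv` are assumptions made for illustration; real feeds carry more fields and need timezone and session handling.

```python
def ticks_to_ohlcv(ticks, bar_seconds):
    """Aggregate time-sorted (timestamp_seconds, price, size) ticks into bars.

    Returns a dict keyed by the bar's start timestamp.
    """
    bars = {}
    for ts, price, size in ticks:
        bucket = ts - ts % bar_seconds  # start of the bar this tick falls in
        bar = bars.get(bucket)
        if bar is None:
            bars[bucket] = {
                "open": price, "high": price, "low": price,
                "close": price, "volume": size,
            }
        else:
            bar["high"] = max(bar["high"], price)
            bar["low"] = min(bar["low"], price)
            bar["close"] = price  # last tick seen so far in this bar
            bar["volume"] += size
    return bars


# Made-up ticks: three in the first minute, one in the second.
ticks = [(0, 100.0, 10), (20, 101.0, 5), (59, 99.5, 7), (60, 100.5, 3)]
for start, bar in ticks_to_ohlcv(ticks, bar_seconds=60).items():
    print(start, bar)
```

Going from ticks to bars is lossy: the bar keeps four prices and a volume total, but the order in which the high and low occurred is gone, which is one reason bar data is unsuitable for simulating high-frequency execution.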

Data cleaning is the hidden pillar of systematic success. You must account for corporate actions like stock splits and dividends, which can create artificial price gaps. Furthermore, "bad ticks" and missing data points must be handled deliberately, for example by filtering outliers and filling gaps with methods that do not leak future prices into the past, rather than simply ignored, as these gaps can break technical indicators and lead to erroneous signals.
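Two of the cleaning steps mentioned above can be sketched in a few lines: back-adjusting prices for a stock split, and filling missing points. The function names are hypothetical. Note that the gap filler carries the last observed value forward, which is a simpler technique than interpolation; it is chosen here because it never uses a price from after the gap.

```python
def back_adjust_for_split(closes, split_index, ratio):
    """Divide all prices before `split_index` by `ratio`.

    For a 2-for-1 split effective at index i, ratio is 2.0 and every close
    before i is halved so the series has no artificial gap.
    """
    return [p / ratio if i < split_index else p for i, p in enumerate(closes)]


def forward_fill(values):
    """Replace None with the most recent non-missing value.

    Leading gaps stay None because there is nothing earlier to carry forward.
    """
    filled, last = [], None
    for v in values:
        if v is not None:
            last = v
        filled.append(last)
    return filled


# Made-up closes with a 2-for-1 split taking effect at index 2.
raw = [100.0, 102.0, 51.5, 52.0]
print(back_adjust_for_split(raw, split_index=2, ratio=2.0))

# Made-up series with missing observations.
print(forward_fill([None, 10.0, None, 10.5]))
```

Dividends can be handled with the same back-adjustment idea using a ratio derived from the dividend amount, though vendors differ in the exact convention they apply.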

| Data Source Type | Strategic Utility | Engineering Complexity |
|---|---|---|
| Standard Price/Volume | Foundational for trend and momentum. | Moderate |
| Alternative Data | Satellite imagery, credit card flows. | Very High |
| Sentiment Feeds | NLP processing of news and social media. | High |
| Macro Indicators | Fed dates, CPI releases, yield curves. | Low-Moderate |

Phase 3: Tactical Logic and Strategy Design

With a hypothesis and clean data, you must now define the exact entry and exit parameters. This is the logic mapping phase. A professional model is composed of three distinct logical layers: the Entry Trigger, the Exit Logic, and the Position Sizing engine. Every rule must be binary and quantifiable; there is no room for "discretion" in a systematic model.

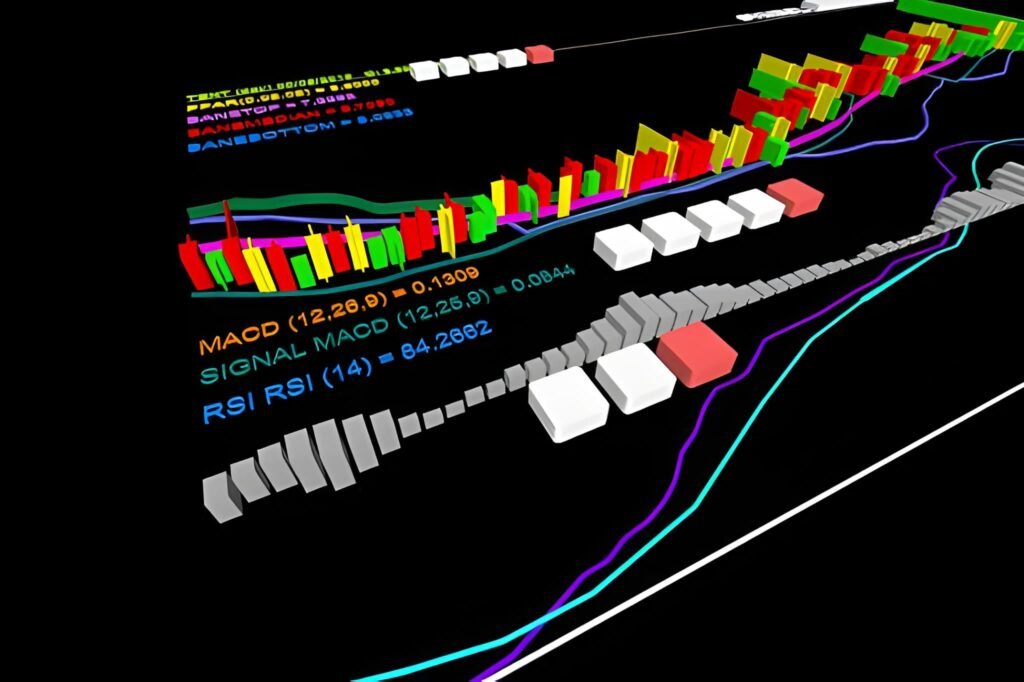

Modern strategies often use non-linear models, such as Random Forests or Gradient Boosted Trees, to weigh different features. For example, a model might buy only if the RSI is below 30, the yield curve is flattening (for instance, the spread between the 10-year and 2-year yields is narrowing), and there is a positive order flow imbalance on the bid. This multi-factor approach aims to improve the odds of capturing genuine signal rather than noise, provided the individual factors are not themselves overfitted.
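The example rule can be written as a binary, quantifiable entry trigger. The sketch below is one possible reading rather than a reference implementation: it uses a simple-average RSI (not Wilder's smoothed version), it interprets the yield condition as a narrowing 10-year minus 2-year spread, and it defines order flow imbalance as the normalized difference between bid and ask volume. All three choices, and every function and parameter name, are assumptions.

```python
def simple_rsi(closes, period=14):
    """RSI from simple averages of gains and losses over the last `period` changes."""
    if len(closes) < period + 1:
        raise ValueError("need at least period + 1 closes")
    window = closes[-(period + 1):]
    changes = [later - earlier for earlier, later in zip(window, window[1:])]
    avg_gain = sum(c for c in changes if c > 0) / period
    avg_loss = sum(-c for c in changes if c < 0) / period
    if avg_loss == 0:
        return 100.0  # conventional value when the window has no losses
    rs = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + rs)


def entry_signal(rsi_value, spread_now, spread_prev, bid_volume, ask_volume):
    """True only when all three conditions hold at once."""
    total = bid_volume + ask_volume
    if total == 0:
        return False  # no resting volume, so no imbalance can be measured
    oversold = rsi_value < 30
    curve_flattening = spread_now < spread_prev  # 10y minus 2y spread narrowing
    imbalance = (bid_volume - ask_volume) / total
    return oversold and curve_flattening and imbalance > 0


# Made-up inputs: a steadily falling price series and a narrowing spread.
falling = [100.0 - i for i in range(15)]
rsi_now = simple_rsi(falling)
print(rsi_now, entry_signal(rsi_now, 0.40, 0.50, bid_volume=600, ask_volume=400))
```

Keeping the trigger as a pure function of its inputs makes it easy to unit test and to reuse unchanged in both the backtest and the live engine.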

Phase 4: Scientific Backtesting and Validation

Backtesting is the process of simulating your strategy against historical data. However, the goal is not to find a set of parameters that produced the highest returns; the goal is to find a model that is robust. The greatest danger in this phase is overfitting (curve-fitting), where the model learns the noise of the past rather than the logic of the market.

Practitioners utilize Walk-Forward Analysis and Cross-Validation to mitigate this risk. This involves training the model on one period of data and testing it on an entirely different, unseen period. If the performance degrades significantly during the "out-of-sample" test, the model is likely overfitted and will fail in live trading.
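A minimal sketch of the walk-forward idea follows. It only generates index ranges, with each test window sitting strictly after its training window, and leaves model fitting to the caller. The function name and the rolling (fixed-length) training window are assumptions; an expanding window is an equally common choice.

```python
def walk_forward_splits(n_samples, train_size, test_size):
    """Yield (train_range, test_range) pairs that roll forward through time.

    Each test range starts immediately after its training range, so the
    model is never evaluated on data it was fitted to.
    """
    start = 0
    while start + train_size + test_size <= n_samples:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size  # slide forward by one test window


for train, test in walk_forward_splits(n_samples=10, train_size=4, test_size=2):
    print(list(train), "->", list(test))
```

Comparing the average in-sample metric with the average out-of-sample metric across these splits gives a direct, if crude, measure of how much performance degrades on unseen data.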

Phase 5: Risk Management and Capital Safety

A professional algorithmic model is as much a risk management system as it is a profit-seeking one. You must define hard guardrails that operate independently of the alpha logic. These include position size limits (e.g., a cap expressed as a fraction of the Kelly Criterion allocation), daily loss limits, and exposure caps by sector or asset class.
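The guardrails described here can be expressed as small functions that sit outside the alpha logic. The sketch below uses the Kelly formula for a simple win/lose bet, f* = p - (1 - p) / b, where p is the win probability and b is the ratio of average win to average loss. The half-Kelly scaling, the 2% hard cap, and the 3% daily loss limit are illustrative assumptions, not recommendations.

```python
def kelly_fraction(win_prob, win_loss_ratio):
    """Kelly fraction for a binary bet: f* = p - (1 - p) / b."""
    return win_prob - (1.0 - win_prob) / win_loss_ratio


def capped_position_fraction(win_prob, win_loss_ratio,
                             kelly_scale=0.5, hard_cap=0.02):
    """Scaled Kelly size, floored at zero and capped at `hard_cap` of equity."""
    scaled = kelly_fraction(win_prob, win_loss_ratio) * kelly_scale
    return max(0.0, min(scaled, hard_cap))


def daily_loss_breached(start_equity, current_equity, max_daily_loss=0.03):
    """True once the day's drawdown reaches the configured limit."""
    drawdown = (start_equity - current_equity) / start_equity
    return drawdown >= max_daily_loss


# Made-up estimates: 55% win rate with equal-sized wins and losses.
print(kelly_fraction(0.55, 1.0))            # roughly 0.10
print(capped_position_fraction(0.55, 1.0))  # half-Kelly, then capped
print(daily_loss_breached(100_000, 96_500))
```

Because the win probability and payoff ratio are themselves estimates, full Kelly sizing tends to be too aggressive in practice, which is why the sketch scales it down and applies an independent cap.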

Sophisticated risk modules also account for tail risk. If the market experiences a "Black Swan" event, such as a flash crash or a geopolitical shock, the model must have an automated "kill switch" that liquidates positions or moves to cash when certain volatility thresholds are breached. In systematic trading, survival is the prerequisite for performance.
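One way such a kill switch might be structured is as a latch driven by rolling realized volatility. In the sketch below, the class name, the window length, and the threshold are hypothetical, and the switch only raises a flag; actually flattening positions would be the execution engine's job.

```python
import statistics
from collections import deque


class VolatilityKillSwitch:
    """Trips when the rolling standard deviation of returns exceeds a threshold.

    Once tripped it stays tripped until `reset()` is called, so trading
    cannot resume automatically after a shock.
    """

    def __init__(self, vol_threshold, window=20):
        self.vol_threshold = vol_threshold
        self.returns = deque(maxlen=window)  # keeps only the latest `window` returns
        self.tripped = False

    def update(self, period_return):
        """Record a new return and report whether the switch is tripped."""
        self.returns.append(period_return)
        if len(self.returns) >= 2:
            realized_vol = statistics.stdev(list(self.returns))
            if realized_vol > self.vol_threshold:
                self.tripped = True
        return self.tripped

    def reset(self):
        """Manual re-arm, intended to require human sign-off."""
        self.returns.clear()
        self.tripped = False


switch = VolatilityKillSwitch(vol_threshold=0.02, window=5)
for r in [0.001, -0.002, 0.0015, -0.001, -0.09, 0.001]:
    print(r, switch.update(r))
```

The latch is a deliberate design choice: a switch that re-arms itself as soon as volatility subsides could send the model straight back into a disorderly market.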

Phase 6: Infrastructure and Connectivity

Transitioning from a backtest to a live environment requires a robust execution engine. You must connect your model to a broker or an exchange via an API (Application Programming Interface). For retail traders, REST APIs are common, while institutional desks typically use the FIX (Financial Information eXchange) protocol. The most latency-sensitive high-frequency trading (HFT) firms often go further and use exchanges' native binary protocols, since FIX is a text-based format.
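To give a feel for what FIX looks like on the wire, the sketch below frames a list of tag=value fields into a message with the standard BeginString (8), BodyLength (9), and CheckSum (10) fields. The helper name `build_fix_message` and all field values (company IDs, order ID, timestamps) are made up, and the example omits everything a real session needs, such as logon, sequence number management, and counterparty-specific required fields.

```python
SOH = "\x01"  # FIX field delimiter (ASCII 0x01)


def build_fix_message(fields, begin_string="FIX.4.2"):
    """Frame (tag, value) pairs as a FIX message.

    BodyLength counts the bytes after the 9= field up to and including the
    delimiter before 10=. CheckSum is the byte sum of everything before the
    10= field, modulo 256, written as three digits.
    """
    body = "".join(f"{tag}={value}{SOH}" for tag, value in fields)
    header = f"8={begin_string}{SOH}9={len(body.encode('ascii'))}{SOH}"
    checksum = sum((header + body).encode("ascii")) % 256
    return f"{header}{body}10={checksum:03d}{SOH}"


# Illustrative New Order Single (35=D): buy 100 shares with a limit at 25.50.
order = build_fix_message([
    (35, "D"),                  # MsgType: New Order Single
    (49, "CLIENT1"),            # SenderCompID (hypothetical)
    (56, "BROKER1"),            # TargetCompID (hypothetical)
    (34, 2),                    # MsgSeqNum
    (52, "20240102-14:30:00"),  # SendingTime
    (11, "ORD-0001"),           # ClOrdID (hypothetical)
    (21, 1),                    # HandlInst: automated execution
    (55, "XYZ"),                # Symbol (hypothetical)
    (54, 1),                    # Side: buy
    (60, "20240102-14:30:00"),  # TransactTime
    (38, 100),                  # OrderQty
    (40, 2),                    # OrdType: limit
    (44, "25.50"),              # Price
])
print(order.replace(SOH, "|"))  # show delimiters as pipes for readability
```

In practice this framing is handled by a FIX engine library rather than written by hand; the point of the sketch is only that each message is a flat, delimited string of numbered fields.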

Slippage control is critical in this phase. The "price on the screen" in a backtest is rarely the price you get in a live market. Your execution engine must use smart order types, such as limit orders or iceberg orders, to minimize market impact. A model with a 1% gross edge per trade is reduced to break-even by 0.5% slippage on each side of the trade, because the entry and the exit together cost 1%.
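The slippage arithmetic is worth making explicit, because costs are paid on both the entry and the exit. The sketch below assumes slippage and fees are expressed as fractions of traded value per side; the function names are hypothetical.

```python
def net_edge(gross_edge, slippage_per_side, fee_per_side=0.0):
    """Edge left after paying slippage and fees on entry and on exit."""
    return gross_edge - 2.0 * (slippage_per_side + fee_per_side)


def breakeven_slippage_per_side(gross_edge, fee_per_side=0.0):
    """Largest per-side slippage the strategy can absorb before losing money."""
    return gross_edge / 2.0 - fee_per_side


# A 1% gross edge with 0.5% slippage on each side leaves nothing.
print(net_edge(0.01, 0.005))
# The same edge with 0.1% slippage per side keeps most of its value.
print(net_edge(0.01, 0.001))
print(breakeven_slippage_per_side(0.01))
```

Feeding realistic per-side costs into the backtest, rather than assuming fills at the last traded price, is the simplest way to find out whether an edge survives contact with the order book.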

Phase 7: Monitoring, Decay, and Optimization

Once a model is live, the work has only just begun. All algorithmic models experience Alpha Decay—the phenomenon where an edge gradually disappears as more participants discover and exploit the same anomaly. You must implement real-time monitoring to track "slippage vs. expectation" and "realized vs. backtested volatility."

If realized performance deviates significantly from the backtest (for example, falls outside a 95% confidence interval around the backtested expectation), the model should be taken offline for review and recalibration. A common cause is a shift in market conditions, and identifying such shifts is known as regime detection. A model designed for a low-interest-rate environment may fail when rates begin to rise, requiring a fundamental adjustment to the underlying logic.
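A simple version of the 95% confidence check can be sketched as a comparison between the live mean return and the backtested mean, using the backtested standard deviation to set the width of the band. This treats returns as independent and roughly normal, which real returns are not, so it should be read as a first-pass alarm under those assumptions. The function name and the sample numbers are hypothetical.

```python
import math


def outside_confidence_band(live_returns, backtest_mean, backtest_std, z=1.96):
    """True if the live mean return falls outside the backtest's ~95% band.

    The band is backtest_mean +/- z * backtest_std / sqrt(n), where n is the
    number of live observations (z = 1.96 for a two-sided 95% interval).
    """
    n = len(live_returns)
    if n == 0:
        raise ValueError("need at least one live return")
    live_mean = sum(live_returns) / n
    half_width = z * backtest_std / math.sqrt(n)
    return abs(live_mean - backtest_mean) > half_width


# Made-up backtest statistics: 0.1% mean daily return, 1% daily volatility.
backtest_mean, backtest_std = 0.001, 0.01

healthy = [0.0005] * 25    # slightly below expectation, within the band
degraded = [-0.005] * 25   # persistently negative, outside the band

print(outside_confidence_band(healthy, backtest_mean, backtest_std))
print(outside_confidence_band(degraded, backtest_mean, backtest_std))
```

The same pattern can be applied to the other monitored quantities, such as realized slippage against modeled slippage, by swapping in the corresponding backtest statistics.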

The Final Systematic Conclusion

Building an algorithmic trading model is an exercise in scientific discipline and psychological resilience. The complexity of the market means that no model is ever "finished." Success requires a relentless cycle of hypothesis testing, data verification, and risk management. For the professional practitioner, the goal is not to predict the future, but to build a system that can manage probabilities with clinical precision. Start with clean data, prioritize risk over profit, and never stop questioning the validity of your signals. The machine is your tool, but the market is the final judge of your logic.